Pervasive Security

Data center security is difficult. Good design practices dictate one thing, security policies another, and compliance regulations yet another. The result is often messy and difficult to explain to anyone outside the original design team. In many cases, enterprises end up with a data center that looks like one of the three figures below.

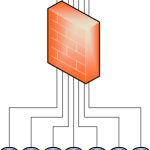

Figure 1 shows a very powerful firewall serving as the default gateway for numerous data segments. Figure 2 is very similar but uses an L3 switch with integrated firewall capabilities instead of a traditional firewall. Figure 3 uses an L3 switch along with a large, transparent firewall that monitors all the necessary segments by way of 802.1Q subinterfaces.

All three of these designs have serious drawbacks related to performance, cost, and management.

- Performance: No firewall can match the forwarding capacity of a modern data center core switch, so inserting a firewall in any of the ways shown above will hamper performance to some degree, whether the bottleneck is throughput, VLAN limits, or connection limits.

- Cost: The best way to mitigate the performance hit is to buy the biggest, fastest firewall you can find. These are expensive boxes by themselves, even without accounting for power, space, and cooling.

- Management: The rulebases on these massive firewalls are unruly at best, and uncontrollable at worst. Introducing a new engineer to the rulebase takes a lot of time, and change control is exhausting because so many applications could be affected by any seemingly innocuous change. Managing these devices gets more difficult as time goes on.

Why do we put ourselves in this situation? Why have we spent decades designing, building, installing, and maintaining these enormously complex security environments?

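Because IP leaves us with no choice. When rules can only be written against IP addresses and ports, the firewall has to sit where all of that traffic converges. For the last 40 years, the only reasonable way to enforce a pervasive security policy has been to place a firewall right in the middle of everything.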

What would an ideal solution consist of?

- Performance: Smaller firewalls, each only responsible for protecting small subsets of the network, would be able to keep up with the traffic demands.

- Cost: Smaller firewalls cost significantly less, even when accounting for their greater number. Employing virtual firewalls drives the price down even further.

- Management: An automation platform that can easily and effectively control a large number of small firewalls would simplify management. A GUI-driven platform also reduces complexity and the errors that come with hand-typed CLI changes.

Fortunately, networking vendors have done a lot of work in this area recently. Now is the time to take advantage of this new technology and push security to the edge!

First, let’s consider Cisco’s Virtual Security Gateway (VSG). In a virtualized environment, the VSG allows us to deploy a firewall in front of each and every virtual machine without the overhead of inspecting every packet: the VSG makes the policy decision for the initial packet of a flow, and the Nexus 1000V’s vPath enforces that decision for the rest of the flow. Moreover, rules are no longer limited to the standard IP/port attributes. We can create rules based on a VM’s name and other VM attributes.

Imagine that: a VM’s security policy can be defined by its naming convention. All of the policies between web and app servers can be automatically enforced as soon as the VM is built. Changing a server’s IP address wouldn’t require any firewall rule changes. And we can enforce this policy between VMs even if they’re in the same subnet, and on the same host.
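To make the idea of name-based policy concrete, here is a minimal Python sketch of how rules keyed on VM naming conventions might be evaluated. It is purely illustrative and assumes a hypothetical `web-`/`app-`/`db-` naming scheme, hypothetical ports, and hypothetical `VM`, `Rule`, and `evaluate` names; it is not VSG's policy syntax, since real VSG policies are defined through Prime NSC rather than in code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VM:
    name: str  # e.g. "web-01"; the naming convention carries the role
    ip: str    # present, but no rule below ever looks at it


@dataclass(frozen=True)
class Rule:
    src_prefix: str  # hypothetical: match the source VM's name prefix
    dst_prefix: str  # hypothetical: match the destination VM's name prefix
    port: int
    action: str      # "permit" or "drop"


def evaluate(rules, src, dst, port, default="drop"):
    """Return the action of the first rule that matches by VM name and port."""
    for rule in rules:
        if (src.name.startswith(rule.src_prefix)
                and dst.name.startswith(rule.dst_prefix)
                and port == rule.port):
            return rule.action
    return default  # anything not explicitly permitted is dropped


# Hypothetical three-tier policy: web may reach app, app may reach db.
RULES = [
    Rule("web-", "app-", 8080, "permit"),
    Rule("app-", "db-", 5432, "permit"),
]

web = VM("web-01", "10.1.1.10")
app = VM("app-01", "10.1.1.20")  # same subnet as web-01, still policed
db = VM("db-01", "10.1.1.30")

print(evaluate(RULES, web, app, 8080))  # permit
print(evaluate(RULES, web, db, 5432))   # drop: web tier may not reach the database

# Re-addressing a VM changes nothing, because no rule mentions an IP.
readdressed = VM("app-01", "10.9.9.9")
print(evaluate(RULES, web, readdressed, 8080))  # permit
```

Because the match is on the VM's name rather than its address, a newly built `web-02` inherits the web-tier policy automatically, and two VMs in the same subnet are still subject to the rules.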

This granular, VM-specific policy is only achievable with an intelligent, GUI-based management platform like the Prime Network Services Controller (NSC). Tight integration between NSC and VMware’s vSphere or Microsoft’s System Center Virtual Machine Manager (SCVMM) allows these policies to be applied immediately as VMs are created, and to move with VMs as they migrate from host to host.

Not all servers are virtualized, though, nor will physical servers ever disappear. Cisco’s Application Centric Infrastructure (ACI) provides the same scalable, feature-rich security policies to physical and virtual environments. ACI’s next-generation Nexus 9000 hardware also allows us to deeply integrate business applications’ needs with the network. Imagine QoS policies that are dynamically provisioned when an application instance starts, or security policies that adapt when PCI-compliant servers move between data centers. Both are possible using ACI’s network controller, the Application Policy Infrastructure Controller (APIC).

Data center equipment is getting smarter and easier to use. Are you interested in taking advantage of these new capabilities? Could you benefit from this kind of design? Talk with OneNeck today.